This post first ran on February 11, 2015.

In 2011, Yoshihiro Kawaoka reported that his team had engineered a pandemic form of the bird flu virus. Bird flu, also known as H5N1, has infected nearly 700 people worldwide and killed more than 400. But it hasn’t yet gained the ability to jump easily from human to human. Kawaoka’s research suggested that capability might be closer than anyone had imagined. His team showed that their virus could successfully hop from ferret to ferret via airborne droplets. In addition to scaring the bejesus out of many, Kawaoka’s controversial study, and a similar study by Ron Fouchier in the Netherlands, sparked a debate about the wisdom of engineering novel and potentially deadly pathogens in the lab.

It’s easy to see why people would be skeptical of research that aims to make pathogens that are deadlier or more transmissible than those found in nature. Marc Lipsitch and Alison Galvani outline many of the criticisms in an editorial published last year. Such experiments “impose a risk of accidental and deliberate release that, if it led to extensive spread of the new agent, could cost many lives. While such a release is unlikely in a specific laboratory conducting research under strict biosafety procedures, even a low likelihood should be taken seriously, given the scale of destruction if such an unlikely event were to occur. Furthermore, the likelihood of risk is multiplied as the number of laboratories conducting such research increases around the globe.”

But Kawaoka makes a decent counterargument. “H5N1 viruses circulating in nature already pose a threat, because influenza viruses mutate constantly and can cause pandemics with great losses of life. Within the past century, ‘Spanish’ influenza, which stemmed from a virus of avian origin, killed between 20 million and 50 million people. Because H5N1 mutations that confer transmissibility in mammals may emerge in nature, I believe that it would be irresponsible not to study the underlying mechanisms,” he wrote in a 2012 editorial.

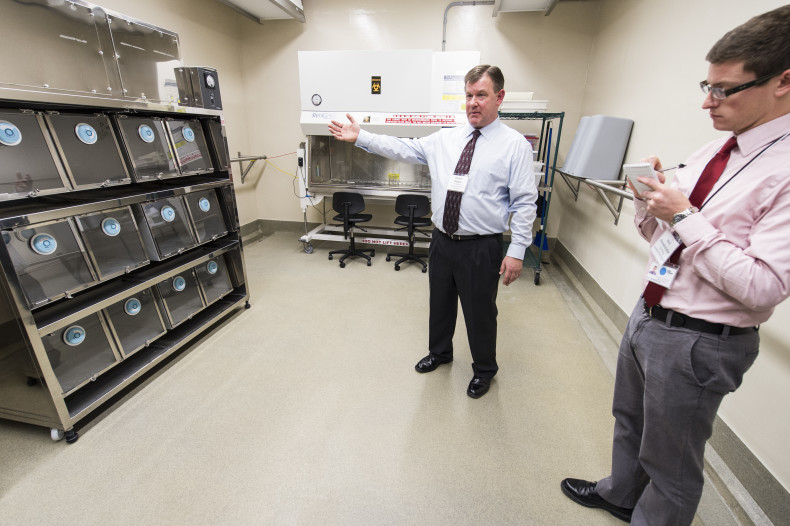

Last week I stood in the very space where Kawaoka’s ferrets fell ill, a small beige room accessible only by passing through an air lock. The air pressure is kept abnormally low in the containment facilities so that air can only flow from the outside in. Researchers typically don Tyvek suits and respirators before entering. But because the facility was shuttered for routine maintenance, I was allowed to walk through in my street clothes: No hood, no gloves, no booties. The lab had been thoroughly disinfected, but my scalp tingled with the knowledge of the viruses that had been thriving there.

Labs that work with microorganisms are classified according to biosafety levels. Microbes that don’t cause disease require few precautions. They can be safely studied in biosafety level 1 (BSL-1) laboratories. However, the most deadly and contagious pathogens, such as Ebola, must be studied in strictly regulated BSL-4 laboratories. The ferret room, part of the University of Wisconsin’s Influenza Research Institute (IRI), is what’s known as a BSL-3 Ag lab, which includes almost all of the features typically found in BSL-4 facilities. This is no slipshod operation. The air coming out of the containment facility passes through multiple HEPA filters and the liquid waste gets cooked at 250 degrees for an hour, a process that makes the basement smell like warm shampoo. The effluent system alone cost a million dollars.

The list of procedures for entering and exiting the facility is dizzyingly long: Before entering the lab, “the researchers are going to take all of their clothes off, including undergarments, and put on scrubs and dedicated shoes. They’re going to wear booties inside of those shoes and booties on the outside. They’re going to take a couple of towels and an extra pair of scrubs and then they’ll go into the secure corridor,” says Rebecca Moritz, select agent program manager in the Office of Biological Safety. Then they move on to another room where they put on their protective gear and enter the air lock. When they leave, they must strip down, ditch their Tyvek according to a strict disrobing protocol, and hit the showers. “Flu isn’t hardy. Soap and water is going to kill it,” Moritz says. And if the soap doesn’t kill it, the giant cooker in the basement certainly will.

Of course, no system is foolproof. Accidents do happen. I wrote about some of them in 2007: That year the UK announced that an outbreak of foot-and-mouth disease had almost certainly been the result of a virus that escaped from a nearby lab. In 2006, a researcher at Texas A&M University came down with brucellosis after disinfecting a chamber used to expose mice to the bacteria. In 2004, two researchers at Beijing’s National Institute of Virology contracted severe acute respiratory syndrome, infecting seven other people and killing one. And later that year, a Russian scientist died after accidentally injecting herself with the Ebola virus.

So the real question is this: Do the benefits of this kind of risky research outweigh the risks? The answer isn’t yet clear. In October 2014, the White House Office of Science and Technology Policy and the Department of Health and Human Services announced that they would stop funding studies that aim to enhance the pathogenicity or transmissibility of influenza, SARS, and MERS (so-called ‘gain-of-function’ studies) while they pause to consider the risks and benefits. But whether months, or even years, of careful deliberation can provide clarity on the issue remains to be seen. The question may simply be unanswerable. “I suspect that no one, not even WHO [the World Health Organization], has done a quantitative risk-benefit analysis of H5N1 research because it cannot be done,” writes virologist Vincent Racaniello. “What basic research will reveal is frequently unknown – if the outcome could be predicted, then it would not be research. Scientists ask questions, and design experiments to answer them, but the results remain elusive until the experiments are done. How can the benefits be quantified if the outcome isn’t certain?”