I can’t help but notice that placebos have crept into the political news in recent weeks. Okay, maybe they aren’t in the headlines but they’re there, just below the surface. That’s because when you see a headline about the Food and Drug Administration, you should immediately start thinking of placebos.

The Trump administration hasn’t named an FDA head yet, but it has laid out its priorities: namely, to clear away the impediments between drug companies and end users. In December, it was whispered that Trump might pick Jim O’Neill, who believes that the market, rather than a faceless bureaucracy, should dictate which drugs get to the shelves. Which is a great idea when it comes to tennis shoes and sports cars, but an absolute catastrophe when it comes to drugs.

But, you say, information wants to be free, people should feel they are in control of their own health, and life-saving drugs should be available as soon as possible. No. When it comes to drugs, this is absolutely wrong because – oddly enough – of the placebo effect.

How do I know this? Because we’ve been down this exact same road before.

I’m not going to lecture you about policy or politics. Instead, let me tell you a story. It centers around an odd academic argument that eventually led to the creation of what we loosely call modern (or evidence-based) medicine. In the late 1950s, the medical industry was in absolute turmoil. As the post-war United States rapidly modernized, the growing middle class was demanding more and better health care. New health and beauty products were flooding the market every day, and President Kennedy was pushing for a massively increased role for the government in medicine through Medicare.

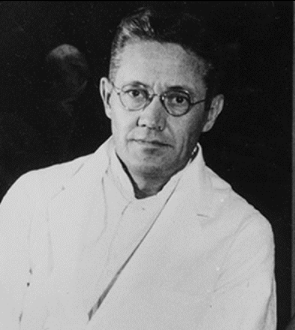

But at the same time, there was an interesting drama playing out around the efficacy of medicine in the halls of academia. It all began with legendary placebo effect pioneer (and, oddly enough, expert in psychotropic drug experiments borrowed from the Nazis and tested on unwitting US soldiers) Henry Beecher. Beecher was the one to first point out that placebo effects were a big deal and shouldn’t be ignored or explained away as the stupid things that stupid patients sometimes do.

In his surprisingly readable seminal paper, “The Powerful Placebo,” he laid out a series of experiments that had apparently been scuttled by the beliefs of their subjects. My favorite of these was by Yale professor E. Morton Jellinek (never in the history of Mortons has there been a man who looked more like a Morton than E. Morton Jellinek). Old Mort was tinkering with pain drugs to see if he could eliminate certain of their more expensive ingredients.

What he found is that indeed he could. And then he found that indeed, for 60 percent of his subjects, he could eliminate all the ingredients and they wouldn’t even know the difference. In other words, the placebo worked just as well as the drug.

These people, he decided, had “psychogenic” pain and a “tendency toward suggestibility.” Unlike the “pure culture” of patients who didn’t fall for the trick. By that logic, more than half of his subjects were impure suggestible fools.

But from a wider perspective, how could we know if our drugs were working when half of us can’t tell the difference between placebo and drug? So along came one of Beecher’s students named – I kid you not – Lou Lasagna. In addition to several layers of delicious pasta, ground beef and cheese, Lasagna had a brilliant and incisive mind.

He realized that there needed to be a tool to separate effective drugs from those just taking up space on the pharmacy shelves. Believe it or not, this was a highly controversial idea at the time. Until then, drugs were required to be safe but not necessarily effective. Soon he joined up with a senator named Estes Kefauver, who made it his mission to add “effective” to drug descriptions.

In hindsight, Lasagna could have chosen a better ally. Kefauver, among the few Southerners to back school integration, was known as the least popular man in Congress. What followed were epic hearings with Kefauver continually asking drug executives how we knew drugs were effective and the drug companies replying, hilariously, that if they didn’t work no one would use them.

Which is exactly where it would still be today, had it not been for thalidomide. The thalidomide disaster in Europe (a global panic caused by a morning sickness pill that was safe for mothers but found to cause birth defects in fetuses) had nothing to do with placebo controls or Lasagna, but the fear it caused in America was intense and immediate. (Though, ironically, the US was largely untouched by thalidomide, thanks to this incredible badass.) To placate the masses, Congress grabbed the first bill dealing with drug development they could find and made it into law. Kefauver’s bill, as it happened.

In 1962, the Drug Efficacy Amendment to the Federal Food, Drug, and Cosmetic Act passed in a single day – the fastest modern passage of a bill outside a declaration of war.

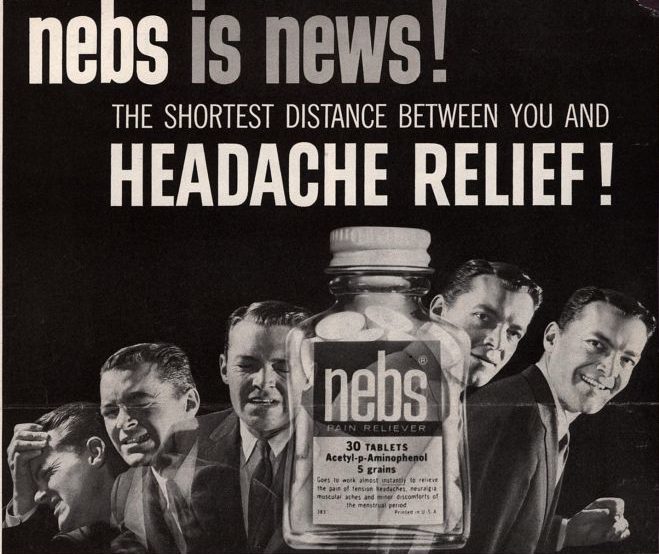

Thus was born the FDA-required double-blind, placebo-controlled trial. Possibly the most expensive and difficult piece of red tape in all of government. A simple requirement that drugs outperform a placebo. It’s not known exactly how many drugs fall victim to Kefauver’s little rule (drug companies don’t exactly announce their failures) but in 2011, only five pain therapies resulted from the 2,000 or so registered trials. With a single drug costing as much as $2 billion to develop, it’s a very expensive speed bump.

But here’s the interesting part – and the one that we must keep in mind today. After the bill passed, there arose an interesting problem: what to do with all the drugs already in use that had never been tested against a placebo?

In 1963 the FDA created the Drug Efficacy Study Implementation (or DESI). Essentially, for the next 20 years, scientists would go through all our old drugs and make sure … well … that they worked. Technically, DESI is still in effect today (since, amazingly, a few of the drugs still haven’t been, and probably never will be, checked) but most of its drug-killing work is done. And when the smoke cleared on the battlefield, more than 1,000 drugs lay dying in the field.

One thousand drugs that the market had deemed effective but that were actually no better than a placebo. And this was back when there weren’t nearly as many drugs on the market in the first place.

The masses are good at many things. Finding planets, for instance. Or driving the world’s car markets to ever-better quality and efficiency. Or proofreading Taylor Swift’s Wikipedia page. But when more than half of us can’t pass the E. Morton Jellinek test, we are not great at picking effective drugs. It’s not that we’re stupid or that the drug companies aren’t good at their jobs. It’s that for many (especially chronic) conditions, it’s incredibly hard to separate what works from what doesn’t. It’s why we spent centuries using spider webs and pigeon feces as medicine. It’s why billions of people rely on things like homeopathy or traditional Chinese medicine.

We wouldn’t crowdsource the best way to do brain surgery, so why would we assume the market knows how to pick a good drug? So when people talk about streamlining drug development or removing red tape or engaging the end user, we must remember we’ve been down this road before.

I think America in fact was touched by the thalidomide crisis. I say this, not because it’s rocket science to add “largely” to that sentence, but because I was so touched by a young girl I taught who’d been a thalidomide baby and had no arms. The reason I was touched was a life lesson that has nothing to do with placebos: I pitied her until I saw how efficiently and unimpressedly she ate her sandwiches with her toes. My goodness, I thought, if she’s not pitying herself, then my pity isn’t doing her any favors. So I quit and I’ve been grateful to her ever since. And I’m REALLY glad for Erik’s badass.

On the other hand, if placebos are so very effective, why not prescribe them before any actual medicine? You’d have to keep changing the name, but if you called it something sufficiently sciencey, it might work 60% of the time!

Thanks, Ann. I’ve never actually met anyone affected by thalidomide, but then I suppose not many people have. Because by and large they died. We often think about the disaster in terms of the deformations, but actually most of the victims died in the womb. I highly recommend that readers click the link above and learn about the badass who saved us all.