This past summer, I spent two weeks sitting, working and, once, sleeping next to a hospital bed, trying and failing to communicate with my father.

He had called for an ambulance on the evening of July 25 because he couldn’t breathe. With end-stage emphysema, he often couldn’t breathe, but apparently that night he was frightened enough to call for help. At the hospital, the doctors intubated him and doused him with the sedatives one needs to withstand a hard plastic tube down the throat. My sister and I never knew if he had agreed to the intubation, or if he was too weak or panicked to voice a clear opinion. Over the next few days in the ICU, although still heavily sedated, he sometimes acted in ways that seemed deliberate: he would open his eyes wide, or furrow his brow, or nod to a question or squeeze my hand. But I was never really sure. I wasn’t sure if he would have wanted us to agree to the tracheostomy procedure, on August 2, or remove the ventilator, on August 9.

What if I could have been more sure?

I couldn’t help but think about that a couple of weeks ago while having coffee with Jon Bardin at the Society for Neuroscience meeting in Washington, D.C. A few years back, Jon left the science magazine where we both worked to pursue a PhD in neuroscience. He joined the lab of Nicholas Schiff, an expert on the neural basis of consciousness, and began studying the brain activity of people with severe brain injury. And now at the conference, Jon told me, he would be presenting a poster of unpublished data suggesting that brain waves can reveal whether a somewhat conscious person is tuning in when other people speak.

Later I found Jon’s poster, one of thousands pinned on boards in the basement of the convention center. The star of his data was patient M1, a 57-year-old woman whom I’ll call Janet. Seven years ago, Janet had a stroke that left her in a ‘minimally conscious state’ until she died about a year ago. During that long hospital stay, she sometimes responded to other people — by tracking objects with her eyes or following simple commands — but never initiated an action and never spoke.

About three years ago, using a technique called electroencephalography (EEG), Jon and his colleagues put a few dozen electrodes on Janet’s scalp and recorded the brain waves emitted over a 72-hour period. Brain waves represent the synchronous activity of thousands or millions of neurons and are measured in frequency units called Hertz, or cycles per second. On a raw EEG read-out, low-frequency brain waves look fat, like a mountainscape, whereas high-frequency waves are skinny and sharp, like blades of grass. Waves of different frequencies have been tied to different biological functions. For example, somewhat slow ‘alpha’ waves, 8 to 13 Hertz, appear when eyes close.
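To make the idea of frequency concrete, here is a minimal sketch in Python that builds two artificial waves, one slow (5 Hertz) and one in the alpha range (10 Hertz), and then measures how strongly each frequency is present in a signal. Everything in it is an illustrative assumption: the sampling rate, the two-second duration, and the function names are made up for this example, and it is not the analysis Jon's team ran on Janet's recordings.

```python
import math

SAMPLE_RATE = 100  # samples per second (assumed for illustration)
DURATION = 2       # seconds of signal (assumed for illustration)


def sine_wave(freq_hz, amplitude=1.0):
    """Build an artificial wave that oscillates freq_hz times per second."""
    n = SAMPLE_RATE * DURATION
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
        for i in range(n)
    ]


def power_spectrum(samples, sample_rate):
    """Return {frequency in Hertz: power} using a plain discrete Fourier transform."""
    n = len(samples)
    spectrum = {}
    for k in range(1, n // 2):
        # Correlate the signal with a cosine and a sine at this frequency.
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
        im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
        freq = k * sample_rate / n
        spectrum[freq] = (re * re + im * im) / (n * n)
    return spectrum


def dominant_frequency(samples, sample_rate):
    """Return the frequency that carries the most power."""
    spectrum = power_spectrum(samples, sample_rate)
    return max(spectrum, key=spectrum.get)


slow = sine_wave(5)    # a slow, "mountain" wave
alpha = sine_wave(10)  # a faster wave in the alpha range
# A mostly slow signal with a weaker 10-Hertz component layered on top.
mixed = [a + 0.3 * b for a, b in zip(slow, alpha)]

print(dominant_frequency(slow, SAMPLE_RATE))
print(dominant_frequency(alpha, SAMPLE_RATE))
print(dominant_frequency(mixed, SAMPLE_RATE))
```

Real EEG is far noisier than these clean sine waves, and researchers typically use faster, more careful spectral methods, but the principle is the same: break a recording into its component frequencies and ask which ones dominate.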

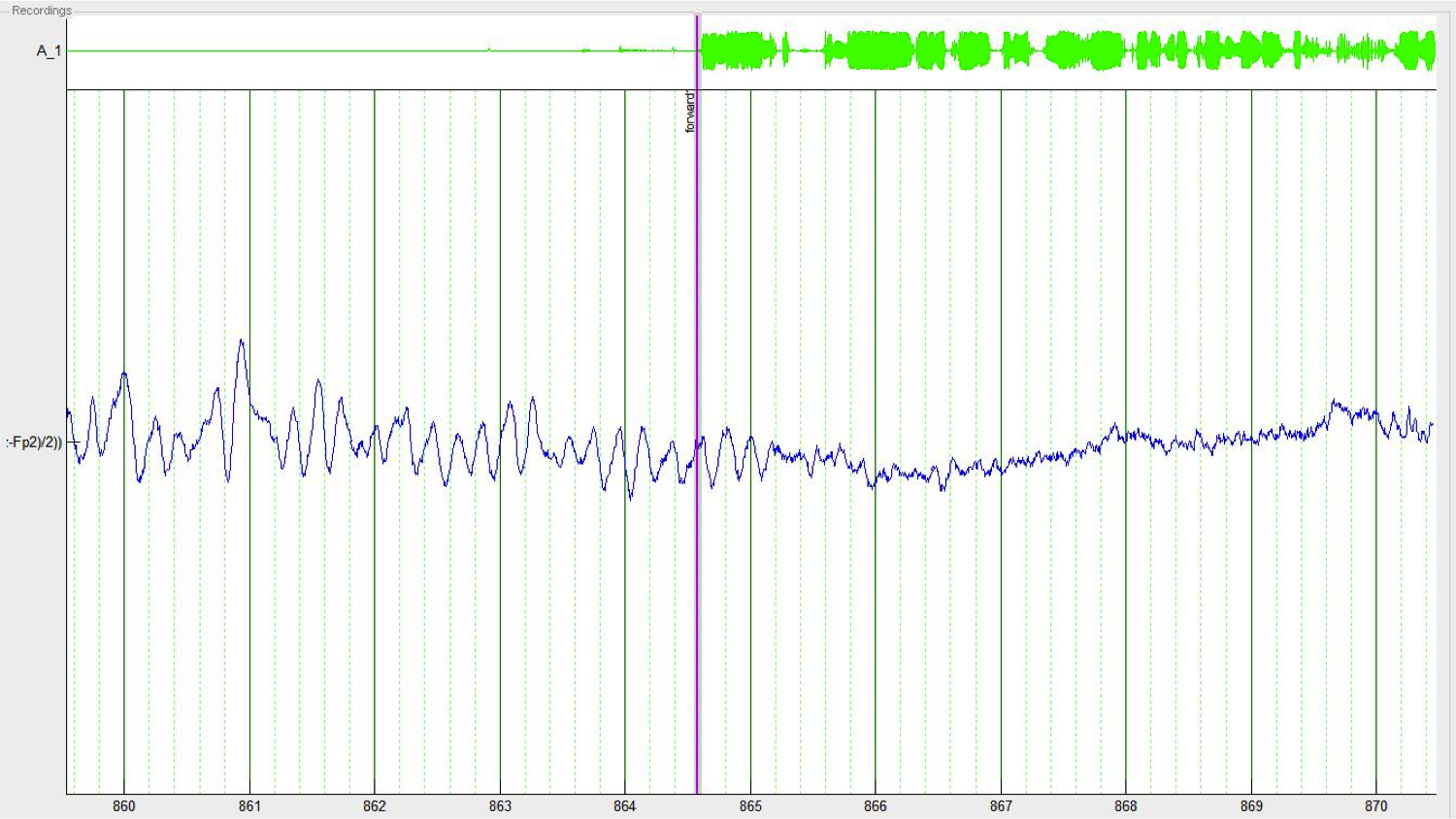

Many things happened around Janet during those three days of recording: her family came in and out, her neighbor watched television, monitors beeped, nurses gabbed. Most relevant for the experiment were the seven minutes when the researchers played a recording of her sister telling a story about a family trip to Paris. Here’s what Janet’s brain waves looked like in the seconds before and after the onset of the recording (green):

The waves switch from mountain tops to blades of grass: from low frequency to high frequency. The same change, more or less, happens when healthy people hear language.

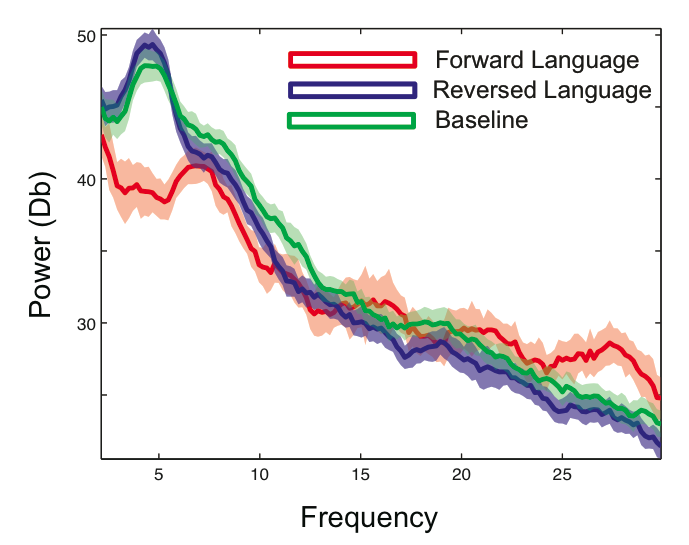

About 10 minutes later, the researchers played the same audio clip backwards — this acts as a nice control stimulus, Jon said, because it’s the same familiar voice, with the same acoustical properties, but no meaningful language. Here’s a comparison of Janet’s brain activity during each type of recording and a quiet period (baseline):

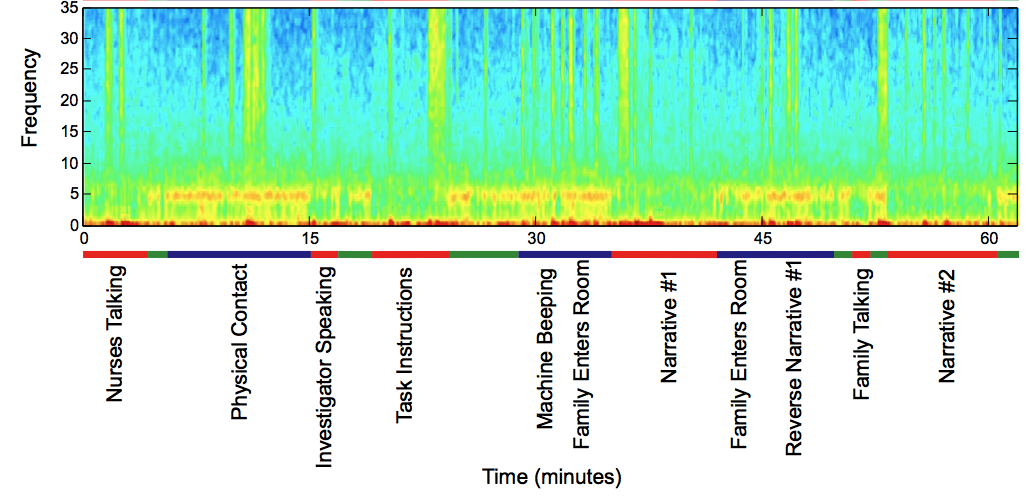

The horizontal axis shows frequency in Hertz. The vertical axis represents power, which is basically a measure of how strong the waves are at each frequency: high power means strong, consistent oscillations at that particular frequency. So Janet’s brain gave off strong 5-Hertz oscillations during baseline and the reversed audio recording, but, as the first graph showed, these low-frequency waves diminished during the normal, forward-playing story. Here’s a longer look at the data, over a 60-minute period:

Every time Janet heard regular language, whether during the forward recording or others’ chatter, those 5-Hertz waves (red blips here) dropped off.

In other words, Jon’s team found that Janet’s brain showed a continuous, robust response to language. “There’s some selective mechanism going on, where the brain is saying, I don’t care about that, I care about this,” Jon said.

The same pattern cropped up in six other minimally conscious patients from whom Jon recorded, and did not show up in two patients in a ‘vegetative state’, a diagnosis that indicates occasional movements, but no measurable awareness.

So what’s the point of all this? A cynic might say that all these pretty graphs basically tell researchers what they already knew: minimally conscious patients are, in fact, minimally conscious, and their brains are more responsive than those of patients in vegetative states.

But I suspect that these patients’ friends and family members, who spend day after day, year after year parked next to hospital beds, would feel differently. They would know that their words were somehow breaking through. As Jon put it, “While we still can’t say whether these particular subjects understand what we say to them, it seems clear from these data that they are listening.”

Hand photo by Janet Moore-Coll, via Flickr. Data images from Jon Bardin.